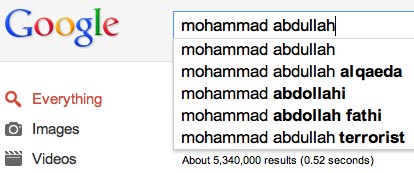

You’re a 16-year-old Muslim kid in America. Say your name is Mohammad Abdullah. Your schoolmates are convinced that you’re a terrorist. They keep typing in Google queries like “is Mohammad Abdullah a terrorist?” and “Mohammad Abdullah al Qaeda.” Google’s search engine learns. All of a sudden, auto-complete starts suggesting terms like “Al Qaeda” as the next term in relation to your name. You know that colleges are looking up your name and you’re afraid of the impression that they might get based on that auto-complete. You are already getting hostile comments in your hometown, a decidedly anti-Muslim environment. You know that you have nothing to do with Al Qaeda, but Google gives the impression that you do. And people are drawing that conclusion. You write to Google but nothing comes of it. What do you do?

This is guilt through algorithmic association. And while this example is not a real case, I keep hearing about real cases. Cases where people are algorithmically associated with practices, organizations, and concepts that paint them in a problematic light even though there’s nothing on the web that associates them with that term. Cases where people are getting accused of affiliations that get produced by Google’s auto-complete. Reputation hits that stem from what people _search_, not what they _write_.

It’s one thing to be slandered by another person on a website, on a blog, in comments. It’s another to have your reputation slandered by computer algorithms. The algorithmic associations do reveal the attitudes and practices of people, but those people are invisible; all that’s visible is the product of the algorithm, without any context of how or why the search engine conveyed that information. What becomes visible is the data point of the algorithmic association. But what gets interpreted is the “fact” implied by said data point, and that gives an impression of guilt. The damage comes from creating the algorithmic association. It gets magnified by conveying it.

- What are the consequences of guilt through algorithmic association?

- What are the correction mechanisms?

- Who is accountable?

- What can or should be done?

Note: The image used here is Photoshopped. I did not use real examples so as to protect the reputations of people who told me their story.

Update: Guilt through algorithmic association is not constrained to Google. This is an issue for any and all systems that learn from people and convey collective “intelligence” back to users. All of the examples that people gave me involved Google because Google is the dominant search engine. I’m not blaming Google. Rather, I think that this is a serious issue for all of us in the tech industry to consider. And the questions that I’m asking are genuine questions, not rhetorical ones.